How to Backup and Migrate Your Centmin Mod Server

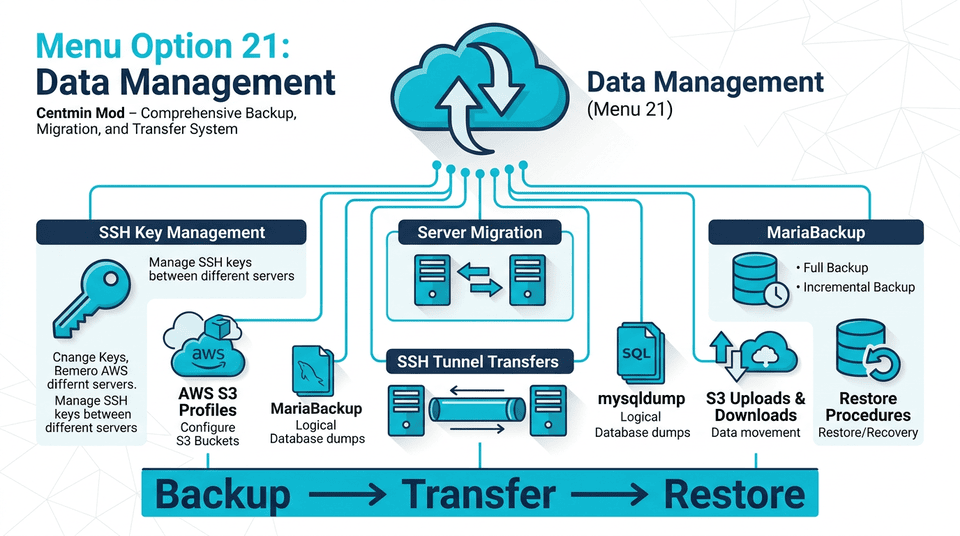

To back up your Centmin Mod server and migrate to a new server, use centmin.sh menu option 21 (Data Management), the primary Centmin Mod backup and server migration tool. It provides the tools needed for full server backups, MariaDB database backups, and server-to-server migration via SSH tunnels or S3-compatible storage (rsync, MariaBackup, mysqldump).

Launch menu option 21 by running the centmin command and selecting option 21:

centmin

# Select menu option 21 for Data Management

Key tools available in menu option 21:

- backups.sh — Full server file and database backups (MariaBackup for hot backups + mysqldump for logical backups)

- tunnel-transfers.sh — Server-to-server migration via SSH tunnels for transferring website files and databases to a new server

- keygen.sh — SSH key generation and management for passwordless authentication between servers

- awscli-get.sh — AWS CLI S3 profile setup for uploading and downloading backups to/from S3-compatible storage

Overview

Menu option 21 in centmin.sh provides a comprehensive data management system for backup, migration, and transfer operations. The underlying scripts are located at /usr/local/src/centminmod/datamanagement/.

Data Management Submenu

Access via centmin.sh → Option 21. Provides 14 submenu options covering SSH keys, S3 profiles, backups, migrations, and transfers.

--------------------------------------------------------

Centmin Mod Data Management

--------------------------------------------------------

1). Manage SSH Keys

2). Manage AWS CLI S3 Profile Credentials

3). Migrate Centmin Mod Data To New Centmin Mod Server

4). Backup Nginx Vhosts Data + MariaBackup MySQL Backups

5). Backup Nginx Vhosts Data Only

6). Backup MariaDB MySQL With MariaBackup Only

7). Backup MariaDB MySQL With mysqldump Only

8). Transfer Directory Data To Remote Server Via SSH

9). Transfer Directory Data To S3 Compatible Storage

10). Transfer Files To S3 Compatible Storage

11). Download S3 Compatible Stored Data To Server

12). S3 To S3 Compatible Storage Transfers

13). List S3 Storage Buckets

14). Back to Main menu

--------------------------------------------------------

At-a-Glance Quick Reference

| Option | Name | Purpose |

|---|---|---|

| 1 | Manage SSH Keys | Create, register, rotate, delete, export, backup SSH keys |

| 2 | Manage AWS CLI S3 Profiles | Create, edit, delete, export S3 storage profiles |

| 3 | Migrate to New Server | Complete server migration with backup + transfer |

| 4 | Vhosts + MariaBackup | Full backup with binary database (physical copy) |

| 5 | Vhosts Only | Website files backup (no database) |

| 6 | MariaBackup Only | Binary database backup (same-version restore) |

| 7 | mysqldump Only | SQL dump backup (cross-version compatible) |

| 8 | SSH Transfer | High-speed zstd-compressed tunnel via nc/socat |

| 9–12 | S3 Operations | Upload, download, sync, and cross-S3 transfers |

| 13 | List S3 Buckets | View S3 buckets for configured profiles |

Quick Start Guide

Before using menu option 21, verify these prerequisites:

| Requirement | Verification | Expected |

|---|---|---|

| CSF Firewall whitelist | csf -a REMOTE_IP | IP added to allow list |

| SSH key authentication | ssh -i /root/.ssh/my1.key root@REMOTE_IP | Login without password |

| AWS CLI (for S3) | aws --version | Version number displayed |

| Sufficient disk space | df -h /home | At least 2x backup size |

| MariaDB running | systemctl status mariadb | Active (running) |
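The locally checkable rows of the table above can be gathered into a small preflight script. This is a minimal sketch, not something shipped with Centmin Mod: the function names `space_ok`, `available_kb`, and `preflight` are hypothetical, and the 2x factor is taken from the table. The CSF and SSH rows are left out because they depend on the remote server.

```shell
# Hypothetical preflight helpers (bash); not part of Centmin Mod.

# space_ok AVAILABLE_KB BACKUP_KB
# Succeeds when free space is at least twice the expected backup size.
space_ok() {
  local available_kb=$1 backup_kb=$2
  [ "$available_kb" -ge $(( backup_kb * 2 )) ]
}

# available_kb PATH
# Prints free space in KB for the filesystem holding PATH (POSIX df format).
available_kb() {
  df -Pk "$1" | awk 'NR == 2 { print $4 }'
}

# preflight BACKUP_KB [PATH]
# Reports each failed prerequisite; returns non-zero if a required one fails.
preflight() {
  local backup_kb=$1 path=${2:-/home} rc=0
  # AWS CLI is only needed for the S3 options, so this is a warning only.
  command -v aws >/dev/null 2>&1 || echo "warning: aws CLI not found (S3 options only)"
  if ! systemctl is-active --quiet mariadb 2>/dev/null; then
    echo "error: MariaDB is not running"
    rc=1
  fi
  if ! space_ok "$(available_kb "$path")" "$backup_kb"; then
    echo "error: less than 2x backup size free on $path"
    rc=1
  fi
  return "$rc"
}
```

For example, `preflight 5242880 /home` would check for roughly 10 GB of free space ahead of a 5 GB backup.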

Quick Command Reference

| Task | Command |

|---|---|

| Full backup (Vhosts + MariaBackup) | backups.sh backup-all-mariabackup comp |

| Vhosts only backup | backups.sh backup-files comp |

| MariaBackup only | backups.sh backup-mariabackup comp |

| mysqldump only | backups.sh backup-all comp |

| Transfer via SSH | tunnel-transfers.sh -p 22 -u root -h REMOTE_IP -m nc -s /path -r /dest -k /root/.ssh/my1.key |

| Upload to S3 | aws s3 sync --profile PROFILE --endpoint-url ENDPOINT /path s3://BUCKET/ |

| Restore MariaDB | mariabackup-restore.sh copy-back /path/to/mariadb_tmp/ |

Backup Type Comparison

Choose the right backup method for your situation:

| Feature | MariaBackup (Option 4/6) | mysqldump (Option 7) |

|---|---|---|

| Backup Speed | Fast (physical copy) | Slower (logical dump) |

| Restore Speed | Fast | Slower |

| Cross-Version | No (same major version only) | Yes |

| Users/Permissions | Yes (full MySQL system) | Per-database only |

| File Size | Larger (full datadir) | Smaller (SQL text) |

| Best For | Same-version migrations | Version upgrades, selective restore |

Decision Guide

Migrating to a new server with the same MariaDB major version? Use MariaBackup (Option 3/4). Different versions (e.g., 10.3 → 10.6)? Use mysqldump (Option 7).
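The decision rule can be expressed as a short helper. This is an illustrative sketch only: the function names are hypothetical, and it assumes that "major version" means the first two components of the version string (10.3, 10.6), as in the example above.

```shell
# Hypothetical helpers (bash); not part of Centmin Mod.

# release_series VERSION
# Prints the first two dot-separated components, e.g. 10.6.12-MariaDB -> 10.6.
release_series() {
  printf '%s\n' "$1" | cut -d. -f1,2
}

# choose_backup_method SOURCE_VERSION DEST_VERSION
# Prints "mariabackup" when both servers are on the same release series,
# otherwise "mysqldump".
choose_backup_method() {
  if [ "$(release_series "$1")" = "$(release_series "$2")" ]; then
    echo mariabackup
  else
    echo mysqldump
  fi
}
```

With these definitions, `choose_backup_method 10.3.39 10.6.12` prints `mysqldump`, and `choose_backup_method 10.6.12 10.6.15` prints `mariabackup`.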

SSH Key Management (Submenu 1)

The SSH key manager (keygen.sh) provides full lifecycle management for SSH keys used in remote server operations. SSH key authentication is required for data transfers and migrations.

Available Operations

| Option | Description |

|---|---|

| 1. List Registered Keys | Shows all registered SSH keys on the system |

| 2. Register Existing Key | Register an existing SSH key (provide private and public key paths) |

| 3. Create New Key | Generate a new key (rsa, ecdsa, or ed25519) and install on remote host |

| 4. Use Existing Key | Configure an existing key for a remote host connection |

| 5. Rotate Key | Replace the current key with a newly generated one |

| 6. Delete Key | Remove an SSH key for a specified remote host |

| 7. Export Key | Export a key to a specified file path |

| 8. Backup All Keys | Create a backup of all SSH keys to a directory |

| 9. Enable Root Login (Cloud VPS) | Enable root SSH on OVH/Hetzner/cloud VPS via sshd_config.d drop-in |

Creating a New SSH Key

When creating a new key (Option 3), you will be prompted for:

| Prompt | Description | Default |

|---|---|---|

| Key type | rsa, ecdsa, or ed25519 | ed25519 |

| Remote IP address | IP of the remote host | — |

| Remote SSH port | SSH port number | 22 |

| Remote SSH username | Username for remote connection | root |

| Key comment | Unique identifier for the key | — |

Cloud VPS Support

For OVH, Hetzner, or other cloud VPS providers that disable root SSH login by default, Option 9 enables root login via the sshd_config.d drop-in approach. Supported sudo usernames: almalinux, rocky, opc, cloud-user.

Command Line Usage

# Generate new SSH key for remote host

keygen.sh gen ed25519 192.168.1.10 22 root mykey-comment

# Rotate existing SSH key

keygen.sh rotatekeys ed25519 192.168.1.10 22 root mykey-comment keyname
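When scripting key creation, it can help to validate the arguments before calling keygen.sh. The wrapper below is a hypothetical sketch: `keygen_gen_cmd` is not a Centmin Mod function, it only prints the command rather than running it, and it assumes the key comment is mandatory because the prompt table lists no default for it.

```shell
# Hypothetical wrapper (bash); prints a keygen.sh command after basic checks.

# keygen_gen_cmd TYPE REMOTE_IP [PORT] [USER] COMMENT
keygen_gen_cmd() {
  local type=${1:-ed25519} ip=$2 port=${3:-22} user=${4:-root} comment=$5
  case "$type" in
    rsa|ecdsa|ed25519) ;;
    *) echo "unsupported key type: $type" >&2; return 1 ;;
  esac
  if [ -z "$ip" ]; then
    echo "remote IP is required" >&2
    return 1
  fi
  if [ -z "$comment" ]; then
    echo "key comment is required" >&2
    return 1
  fi
  # Argument order follows the keygen.sh gen example above.
  echo "keygen.sh gen $type $ip $port $user $comment"
}
```

Printing the command first makes it easy to review before running it on a production server.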

AWS CLI S3 Profiles (Submenu 2)

Manage AWS CLI S3 profile credentials for S3-compatible storage providers. Required for S3 upload/download/sync operations (Submenus 9–13).

Creating a New S3 Profile

When creating a new profile (Option 3), you provide:

| Prompt | Description |

|---|---|

| S3 storage provider | AWS, Cloudflare R2, Backblaze, DigitalOcean, etc. |

| Profile name | Unique name (e.g., r2, b2, do) |

| Endpoint URL | S3-compatible endpoint for the provider |

| Access Key | Your access key ID |

| Secret Key | Your secret access key |

| Default Region | Region code (e.g., us-east-1, auto) |

S3 Provider Endpoint Reference

| Provider | Profile | Endpoint URL |

|---|---|---|

| Amazon S3 | aws | https://s3.REGION.amazonaws.com |

| Cloudflare R2 | r2 | https://ACCOUNT_ID.r2.cloudflarestorage.com |

| Backblaze B2 | b2 | https://s3.REGION.backblazeb2.com |

| DigitalOcean Spaces | do | https://REGION.digitaloceanspaces.com |

| Linode Object Storage | linode | https://REGION.linodeobjects.com |

| Vultr Object Storage | vultr | https://REGION.vultrobjects.com |

| Wasabi | wasabi | https://s3.REGION.wasabisys.com |

| UpCloud | upcloud | https://REGION.upcloudobjects.com |
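For scripts that work with several providers, the endpoint table can be encoded as a lookup. This is a sketch under the assumption that the profile names match the table above; `s3_endpoint` is a hypothetical helper, and the second argument stands in for REGION (or ACCOUNT_ID for Cloudflare R2).

```shell
# Hypothetical lookup (bash) built from the endpoint table above.

# s3_endpoint PROFILE REGION_OR_ACCOUNT_ID
s3_endpoint() {
  local profile=$1 value=$2
  case "$profile" in
    aws)     echo "https://s3.${value}.amazonaws.com" ;;
    r2)      echo "https://${value}.r2.cloudflarestorage.com" ;;
    b2)      echo "https://s3.${value}.backblazeb2.com" ;;
    'do')    echo "https://${value}.digitaloceanspaces.com" ;;
    linode)  echo "https://${value}.linodeobjects.com" ;;
    vultr)   echo "https://${value}.vultrobjects.com" ;;
    wasabi)  echo "https://s3.${value}.wasabisys.com" ;;
    upcloud) echo "https://${value}.upcloudobjects.com" ;;
    *) echo "unknown profile: $profile" >&2; return 1 ;;
  esac
}
```

The result can be passed to the AWS CLI, for example `aws s3 ls --profile r2 --endpoint-url "$(s3_endpoint r2 ACCOUNT_ID)"`.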

Command Line Usage

# Install AWS CLI and create a profile

awscli-get.sh install PROFILE ACCESS_KEY SECRET_KEY REGION OUTPUT

# Update AWS CLI

awscli-get.sh update

Server Migration (Submenu 3)

Complete server migration with automatic backup and transfer. This combines backup (Option 4 or 7) with SSH tunnel transfer to move all data to a new Centmin Mod server.

Important

Migrations are not finalized until you change the domain's DNS records. You can perform test migrations on test servers until you are comfortable with the final move.

Pre-Migration Checklist

- Both servers have Centmin Mod installed

- CSF Firewall allows traffic between servers (csf -a REMOTE_IP on both)

- SSH key authentication is configured (Submenu 1)

- Sufficient disk space on both servers

- MariaDB versions documented on both servers

- Test migration planned (do not change DNS until verified)

Migration Workflow

- Select backup method: MariaBackup (same DB version) or mysqldump (different versions)

- Optional compression: tar + zstd for faster transfers

- Backup created at /home/databackup/TIMESTAMP/

- Transfer via SSH: Configure tunnel-transfers.sh parameters

- Restore on destination: Extract, compare, restore files and database

- Verify: Test nginx config (nginx -t), check sites

- Update DNS when ready to go live

Command Line Usage

# Step 1: Create full backup with compression

backups.sh backup-all-mariabackup comp 2>&1 | tee backup-all.log

# Step 2: Get backup directory from log

transfer_backup_dir=$(grep 'Backup Log saved: ' backup-all.log \
  | awk '{print $10}' | xargs dirname)

# Step 3: Transfer to remote server via SSH

tunnel-transfers.sh \
  -p 22 \
  -u root \
  -h 123.123.123.123 \
  -m nc \
  -b 262144 \
  -l 12345 \
  -s "${transfer_backup_dir}" \
  -r /home/remotebackup \
  -k /root/.ssh/my1.key
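If the log line is not found in Step 2, `transfer_backup_dir` ends up empty and Step 3 would run with no source directory. A small guard between the two steps avoids that. This is a hypothetical sketch; `require_backup_dir` is not part of Centmin Mod.

```shell
# Hypothetical guard (bash) to run between Step 2 and Step 3.

# require_backup_dir DIR
# Prints DIR when it is a non-empty path to an existing directory;
# otherwise explains the problem on stderr and returns non-zero.
require_backup_dir() {
  local dir=$1
  if [ -z "$dir" ]; then
    echo "backup directory is empty; check backup-all.log" >&2
    return 1
  fi
  if [ ! -d "$dir" ]; then
    echo "not a directory: $dir" >&2
    return 1
  fi
  printf '%s\n' "$dir"
}
```

In a migration script, this could be called as `require_backup_dir "$transfer_backup_dir" || exit 1` before invoking tunnel-transfers.sh.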

MariaBackup Backups (Submenu 4 & 6)

MariaBackup creates physical/binary backups of MariaDB databases. This is the fastest method for backup and restore, but requires the same MariaDB major version on source and destination.

Submenu 4: Vhosts + MariaBackup (Full Backup)

Creates a complete backup of website files AND database. Includes:

- Nginx configuration files and vhost directories

- Centmin Mod configuration (/etc/centminmod/)

- MariaDB physical backup (all databases, users, permissions)

- Optional tar + zstd compression

- MariaBackup restore script included in backup

Submenu 6: MariaBackup Only

Database-only backup without website files. Useful for daily database backups.

Command Line Usage

# Full backup: Vhosts + MariaBackup (with compression)

backups.sh backup-all-mariabackup comp

# Full backup: Vhosts + MariaBackup (no compression)

backups.sh backup-all-mariabackup

# MariaBackup only (with compression)

backups.sh backup-mariabackup comp

# MariaBackup only (no compression)

backups.sh backup-mariabackup

# Vhosts only (with compression)

backups.sh backup-files comp

Custom Directories

You can extend the default backup directories by creating /etc/centminmod/backups.ini. Add custom paths to DIRECTORIES_TO_BACKUP and DIRECTORIES_TO_BACKUP_NOCOMPRESS arrays.

mysqldump Backups (Submenu 7)

Logical/SQL dump backup using mysqldump. Slower than MariaBackup but cross-version compatible, making it the right choice for MariaDB version upgrades.

Key Features

- Uses the faster --tab delimited backup option

- Creates separate .sql schema structure files and .txt data files per table

- Schema files are uncompressed; data files can be zstd compressed

- Generates a restore.sh script in the backup directory

- If database name already exists on restore, creates _restorecopy_datetimestamp to prevent overwrites

Command Line Usage

# mysqldump backup (with compression)

backups.sh backup-all comp

# mysqldump backup (no compression)

backups.sh backup-all

# mysqldump only - no vhosts

backups.sh backup-mysql

SSH Tunnel Transfers (Submenu 8)

High-speed data transfer via SSH using netcat (nc) or socat tunnels with zstd compression. Transfer speeds can approach network and disk line rates.

tunnel-transfers.sh Parameters

| Flag | Description | Default |

|---|---|---|

| -p | SSH port | 22 |

| -u | SSH username | root |

| -h | Remote hostname/IP | — |

| -m | Tunnel method (nc or socat) | nc |

| -b | Buffer size (bytes) | 131072 |

| -l | Listen port | 12345 |

| -s | Source directory | — |

| -r | Remote destination | — |

| -k | SSH private key path | — |

Example

# Transfer backup directory to remote server

tunnel-transfers.sh \
  -p 22 \
  -u root \
  -h 123.123.123.123 \
  -m nc \
  -b 262144 \
  -l 12345 \
  -s /home/databackup/070523-072252 \
  -r /home/remotebackup \
  -k /root/.ssh/my1.key
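To avoid retyping every flag, the defaults from the parameter table can be folded into a helper that prints the full command. The sketch below is hypothetical (`tunnel_cmd` is not a Centmin Mod function) and only prints the command line so it can be reviewed before it is run.

```shell
# Hypothetical command builder (bash) using the defaults from the table above.

# tunnel_cmd HOST SOURCE_DIR REMOTE_DIR KEY [PORT] [USER] [METHOD] [BUFFER] [LISTEN_PORT]
tunnel_cmd() {
  local host=$1 src=$2 dest=$3 key=$4
  local port=${5:-22} user=${6:-root} method=${7:-nc}
  local buffer=${8:-131072} listen=${9:-12345}
  case "$method" in
    nc|socat) ;;
    *) echo "method must be nc or socat" >&2; return 1 ;;
  esac
  if [ -z "$host" ] || [ -z "$src" ] || [ -z "$dest" ] || [ -z "$key" ]; then
    echo "host, source, destination and key are required" >&2
    return 1
  fi
  printf 'tunnel-transfers.sh -p %s -u %s -h %s -m %s -b %s -l %s -s %s -r %s -k %s\n' \
    "$port" "$user" "$host" "$method" "$buffer" "$listen" "$src" "$dest" "$key"
}
```

Calling `tunnel_cmd 123.123.123.123 /home/databackup/070523-072252 /home/remotebackup /root/.ssh/my1.key` prints the command with the default port, user, method, buffer size, and listen port filled in.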

Performance Benchmarks

| Method | Distance | Expected Speed |

|---|---|---|

| SSH tunnel (nc) | Same datacenter | 300–500 MB/s |

| SSH tunnel (nc) | Cross-region | 50–150 MB/s |

| SSH tunnel (socat) | Any | Similar to nc, better for unstable connections |

| S3 sync | Cloud provider | 50–200 MB/s |

S3 Uploads & Downloads (Submenus 9–13)

Transfer data to and from S3-compatible storage providers using AWS CLI. Requires a configured S3 profile (Submenu 2).

S3 Operations

| Submenu | Operation | AWS CLI Command |

|---|---|---|

| 9 | Sync directory to S3 | aws s3 sync |

| 10 | Upload files to S3 | aws s3 cp |

| 11 | Download from S3 | aws s3 cp (from S3) |

| 12 | S3 to S3 transfer | aws s3 cp (S3 to S3) |

| 13 | List S3 buckets | aws s3 ls |

S3 Command Examples

# Sync directory to Cloudflare R2

aws s3 sync --profile r2 \
  --endpoint-url https://ACCOUNT_ID.r2.cloudflarestorage.com \
  /home/databackup/070523-072252 s3://BUCKET/

# Upload file to S3

aws s3 cp --profile PROFILE \
  --endpoint-url ENDPOINT \
  /path/to/file.tar.zst s3://BUCKET/

# Download from S3

aws s3 cp --profile PROFILE \
  --endpoint-url ENDPOINT \
  s3://BUCKET/file.tar.zst /home/localdirectory/

# List buckets

aws s3 ls --profile PROFILE --endpoint-url ENDPOINT
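Before a large upload, it can be worth previewing what a sync would transfer. The AWS CLI `s3 sync` command accepts a `--dryrun` flag that lists the operations without performing them. The helper below is a hypothetical sketch that prints such a preview command; `s3_sync_preview_cmd` is not part of Centmin Mod.

```shell
# Hypothetical helper (bash); prints an aws s3 sync command with --dryrun.

# s3_sync_preview_cmd PROFILE ENDPOINT SOURCE_DIR BUCKET
s3_sync_preview_cmd() {
  local profile=$1 endpoint=$2 src=$3 bucket=$4
  if [ -z "$profile" ] || [ -z "$endpoint" ] || [ -z "$src" ] || [ -z "$bucket" ]; then
    echo "usage: s3_sync_preview_cmd PROFILE ENDPOINT SOURCE_DIR BUCKET" >&2
    return 1
  fi
  printf 'aws s3 sync --dryrun --profile %s --endpoint-url %s %s s3://%s/\n' \
    "$profile" "$endpoint" "$src" "$bucket"
}
```

Once the preview output looks right, the same command can be run without `--dryrun` to perform the actual sync.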

Restore Procedures

After transferring backup data to the destination server, follow these steps to restore.

Step 1: Extract the Backup

# Create restore directory and extract (tar 1.31+ with zstd)

mkdir -p /home/restoredata

tar -I zstd -xf /home/remotebackup/centminmod_backup.tar.zst \
  -C /home/restoredata

Step 2: Understand Backup Structure

| Backup Path | Original Location | Contents |

|---|---|---|

| /home/restoredata/etc/centminmod | /etc/centminmod | Centmin Mod configs |

| /home/restoredata/etc/pure-ftpd | /etc/pure-ftpd | Virtual FTP user database |

| .../TIMESTAMP/domains_tmp | /home/nginx/domains | Nginx vhost directories |

| .../TIMESTAMP/mariadb_tmp | /var/lib/mysql | MariaDB data + restore script |

| /home/restoredata/root/tools | /root/tools | Centmin Mod tools |

| /home/restoredata/usr/local/nginx | /usr/local/nginx | Nginx installation |

Step 3: Compare Before Restoring

# Compare backup vs destination before restoring

diff -ur /etc/centminmod /home/restoredata/etc/centminmod/

diff -ur /usr/local/nginx /home/restoredata/usr/local/nginx/

diff -ur /root/tools /home/restoredata/root/tools/
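Full `diff -ur` output can be long on a busy server. For a quick overview first, `diff -rq` lists only which files differ or exist on one side. The helper below is a hypothetical sketch that counts those entries; `count_differences` is not part of Centmin Mod.

```shell
# Hypothetical helper (bash) for a quick overview before reading full diffs.

# count_differences LIVE_DIR BACKUP_DIR
# Prints the number of files that differ or exist on only one side.
count_differences() {
  # diff -rq prints one line per differing or one-sided entry;
  # grep -c '' counts those lines (and prints 0 when there are none).
  diff -rq "$1" "$2" 2>/dev/null | grep -c ''
}
```

For example, `count_differences /etc/centminmod /home/restoredata/etc/centminmod` gives a single number indicating how much review the full diff will need.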

Step 4: Backup New Server Files First

Always Backup Before Overwriting

Before restoring, back up the new server's existing configuration files so you can revert if needed.

# Backup new server configs before overwriting

\cp -af /usr/local/nginx /usr/local/nginx_original

\cp -af /etc/centminmod/custom_config.inc \
  /etc/centminmod/custom_config.inc.original

\cp -af /etc/my.cnf /etc/my.cnf.original

\cp -af /root/.my.cnf /root/.my.cnf.original
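If the commands above are run a second time, the saved copies would be overwritten with already-modified files, and copying a directory onto an existing directory would nest it inside. A guarded variant avoids both. This is a hypothetical sketch: `keep_original` is not part of Centmin Mod, and it uses a `.original` suffix for every path, which differs from the `nginx_original` name used above.

```shell
# Hypothetical helper (bash); saves a pristine copy only once.

# keep_original PATH
# Copies PATH to PATH.original, preserving attributes, unless PATH is
# missing or a saved copy already exists.
keep_original() {
  local path=$1
  if [ ! -e "$path" ]; then
    echo "skip (missing): $path"
    return 0
  fi
  if [ -e "${path}.original" ]; then
    echo "skip (already saved): $path"
    return 0
  fi
  # "command cp" bypasses any cp alias, like the leading backslash above.
  command cp -af "$path" "${path}.original"
}
```

A loop such as `for p in /etc/my.cnf /root/.my.cnf; do keep_original "$p"; done` would then be safe to rerun.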

Step 5: Restore Files

# Restore configuration files

\cp -af /home/restoredata/etc/centminmod/* /etc/centminmod/

\cp -af /home/restoredata/usr/local/nginx/* /usr/local/nginx/

\cp -af /home/restoredata/root/tools/* /root/tools/

# Restore Nginx vhost data (replace TIMESTAMP)

\cp -af /home/restoredata/home/databackup/TIMESTAMP/domains_tmp/* \
  /home/nginx/domains/

# Restore cronjobs

crontab /home/remotebackup/cronjobs_tmp/root_cronjobs

Step 6: Restore MariaDB Database

The mariabackup-restore.sh script is included in each MariaBackup backup:

| Option | Behavior |

|---|---|

| copy-back | Copies backup files to /var/lib/mysql, preserves backup |

| move-back | Moves backup files to /var/lib/mysql, removes backup (saves disk space) |

# Restore MariaDB (replace TIMESTAMP with actual value)

mariabackup-restore.sh copy-back \
  /home/restoredata/home/databackup/TIMESTAMP/mariadb_tmp/

# Restore MySQL credentials

\cp -af /home/restoredata/root/.my.cnf /root/.my.cnf

# Verify Nginx config

nginx -t

# Reload Nginx

ngxreload

Version Mismatch

The mariabackup-restore.sh script validates MariaDB versions and aborts if they do not match. Use mysqldump for cross-version migrations.

Recommended Backup Schedule

| Backup Type | Frequency | Command |

|---|---|---|

| Full (Vhosts + MariaBackup) | Weekly | backups.sh backup-all-mariabackup comp |

| MariaBackup Only | Daily | backups.sh backup-mariabackup comp |

| Vhosts Only | Daily | backups.sh backup-files comp |

| mysqldump | Before upgrades | backups.sh backup-all comp |

| S3 Offsite Sync | Daily | aws s3 sync ... |
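The schedule could be implemented with cron. The sketch below prints candidate crontab lines for review rather than installing them. It rests on several assumptions: that backups.sh lives in the datamanagement directory mentioned in the Overview, that it runs unattended when called from cron, and that the times shown (placeholders) suit your server. `print_backup_cron` is a hypothetical helper.

```shell
# Hypothetical helper (bash); prints crontab lines for the schedule above.

print_backup_cron() {
  # Assumed script location, based on the Overview section.
  local dm=/usr/local/src/centminmod/datamanagement
  cat <<EOF
# Weekly full backup: Sunday 02:00 (placeholder time)
0 2 * * 0 $dm/backups.sh backup-all-mariabackup comp >/dev/null 2>&1
# Daily MariaBackup: Monday-Saturday 02:00 (placeholder time)
0 2 * * 1-6 $dm/backups.sh backup-mariabackup comp >/dev/null 2>&1
# Daily vhosts backup: Monday-Saturday 04:00 (placeholder time)
0 4 * * 1-6 $dm/backups.sh backup-files comp >/dev/null 2>&1
EOF
}
```

The daily jobs skip Sunday because the weekly full backup already covers both files and database that day. Review the printed lines, then add them with `crontab -e`.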

3-2-1 Backup Rule

Keep 3 copies of your data on 2 different storage media with 1 offsite copy (S3-compatible storage). zstd compression typically reduces backup size by 60–80%, depending on how compressible the data is.
